The Responsive City: AI Agents Revolutionizing iOS Development for Education and Healthcare

Introduction: The Dawn of Agent-Driven iOS Innovation

The digital landscape is undergoing a profound transformation, with Artificial Intelligence (AI) agents emerging as pivotal players in various sectors. This shift is particularly impactful in software development, where AI is not just augmenting human capabilities but also demonstrating potential for autonomous creation. As iOS remains one of the two major mobile platforms, the convergence of AI agents and iOS development promises a new era of innovation. This article explores how AI agents can revolutionize iOS app development, with a specific focus on their potential to create transformative applications for the critical fields of education and healthcare.

AI Agents in Software Development: A Paradigm Shift

Generative AI (GenAI) is rapidly redefining the software development lifecycle (SDLC), promising gains in productivity, speed, and quality. Far from mere tools, GenAI systems are evolving into sophisticated collaborators and, in some cases, autonomous agents capable of performing complex development tasks.

Key areas where GenAI is making an impact include:

- Code Generation and Autocompletion: Tools like GitHub Copilot and similar LLM-powered assistants can generate code snippets, complete functions, and even suggest entire algorithms, significantly accelerating the coding process.
- Testing and Debugging: AI agents can analyze codebases, identify potential bugs, generate test cases, and even suggest fixes, leading to more robust and reliable software.
- Requirements to Deployment: From transforming initial ideas into detailed requirements and user stories, to generating wireframes, creating documentation, and even assisting with deployment strategies, AI is touching every stage of development.
- Autonomous Agent Collaboration: The future envisions AI agents communicating and collaborating, autonomously understanding requirements, breaking down problems, and generating code. These agents are expected to self-improve, continuously upgrading their algorithms and strategies based on vast datasets and feedback loops.

While these advancements are broad in their application, their principles are directly transferable to the specialized world of iOS development, paving the way for a new generation of smart, agent-developed applications.
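
The generate, test, and refine cycle described above can be sketched in a few lines of Swift. This is a minimal illustration under stated assumptions, not a description of how any particular agent product works: `refine`, `TestReport`, and both closures are hypothetical names, and the closures stand in for a model call and a test runner.

```swift
import Foundation

/// Result of running a test suite against a candidate (hypothetical shape).
struct TestReport {
    let failures: [String]
    var passed: Bool { failures.isEmpty }
}

/// Asks a generator for a candidate, runs the tests, and feeds any failures
/// back into the next attempt until the tests pass or the budget runs out.
/// Returns nil when no candidate passes within `maxAttempts`.
func refine(
    maxAttempts: Int,
    generate: (_ feedback: [String]) -> String,
    test: (_ candidate: String) -> TestReport
) -> (candidate: String, attempts: Int)? {
    guard maxAttempts > 0 else { return nil }
    var feedback: [String] = []
    for attempt in 1...maxAttempts {
        let candidate = generate(feedback)
        let report = test(candidate)
        if report.passed { return (candidate, attempt) }
        feedback = report.failures  // the next attempt sees what went wrong
    }
    return nil
}

// Usage with stubbed closures: the first draft fails, the second passes.
let outcome = refine(
    maxAttempts: 3,
    generate: { feedback in feedback.isEmpty ? "draft-1" : "draft-2" },
    test: { candidate in
        TestReport(failures: candidate == "draft-2" ? [] : ["loginTest failed"])
    }
)
if let outcome = outcome {
    // prints "Accepted draft-2 after 2 attempt(s)"
    print("Accepted \(outcome.candidate) after \(outcome.attempts) attempt(s)")
}
```

The attempt budget is the important design choice here: it keeps an automated loop from running indefinitely and gives a natural point to hand the problem back to a human developer.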

The

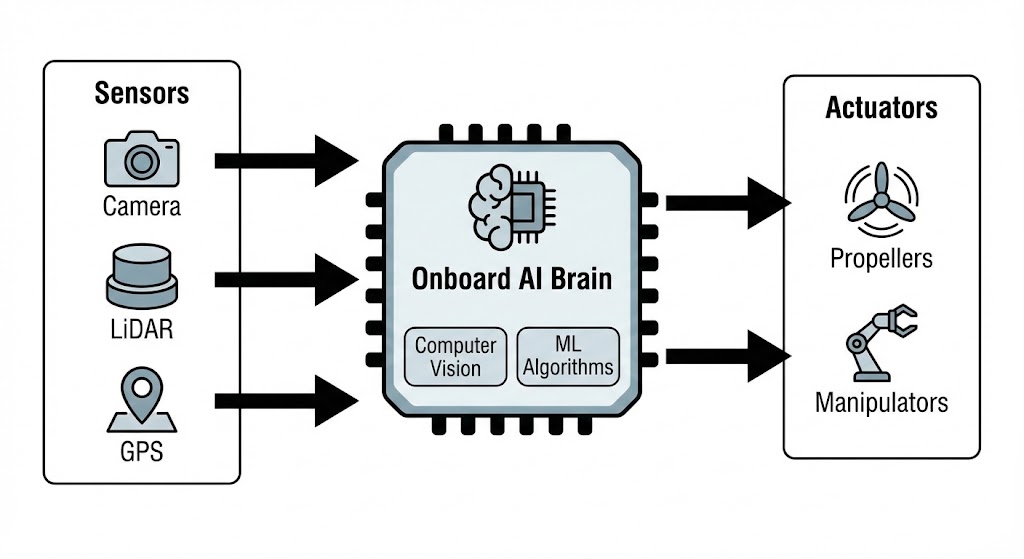

iOS Landscape for AI: Building Blocks for Agent-Driven Apps

Apple’s ecosystem, with its robust development tools and powerful on-device machine learning frameworks (such as Core ML), provides a fertile ground for AI agent-driven development. While specific “AI agent develops iOS app” scenarios are still nascent, the underlying technologies are well-established. These frameworks allow developers to integrate machine learning models directly into their applications, enabling features like image recognition, natural language processing, and predictive analytics to run efficiently on Apple devices. As generative AI matures, it is expected to build on these capabilities, empowering agents to design, build, and optimize iOS applications with greater autonomy.

Transforming Education with Agent-Developed iOS Apps

The integration of AI into education is already transforming learning experiences. With AI agents capable of contributing to app development, the creation of highly personalized and adaptive educational iOS applications can reach new heights. Imagine agents designing apps that:

- Offer Hyper-Personalized Learning Paths: AI agents could develop apps that adapt to each student’s unique learning style, pace, and knowledge gaps in real time. Examples from current AI in education include platforms like DreamBox and Smart Sparrow, which dynamically adjust lessons. Agent-developed apps could take this further, offering bespoke content generation.
- Automate Administrative and Assessment Tasks: Apps created by agents could streamline grading, scheduling, and report generation, freeing educators to focus more on teaching. Automated assessment tools already exist, but agent-driven development could lead to more nuanced and adaptive assessment methods integrated directly into learning apps.
- Provide Intelligent Tutoring and Support: Agent-developed iOS apps could feature advanced chatbots and virtual assistants, offering 24/7 personalized feedback, answering questions, and providing support tailored to individual student needs, similar to current systems like Carnegie Learning or Mainstay.
- Generate Engaging Educational Content: AI agents could create interactive lessons, simulations, and gamified content directly within educational apps, fostering deeper engagement and understanding. Tools like Magic School AI and Eduaide.AI already assist in content creation, and agents could automate the app-integration of such generated content.
- Enhance Accessibility: Agents could develop inclusive apps with integrated assistive technologies, such as advanced speech recognition, real-time transcription, and personalized interfaces for students with diverse learning needs, building upon existing tools like Notta.
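
One simple way to picture the adaptive behavior described in the list above is a mastery estimate that moves with each answer and selects the next difficulty band. The sketch below is illustrative only: `SkillTracker`, `Difficulty`, the learning rate of 0.3, and the band cutoffs are assumptions chosen for the example, not values taken from DreamBox, Smart Sparrow, or any other platform.

```swift
import Foundation

/// Difficulty bands a lesson can be served at (hypothetical).
enum Difficulty { case easy, medium, hard }

/// Tracks a learner's estimated mastery of one skill.
struct SkillTracker {
    /// Estimated mastery in 0...1; starts at a neutral 0.5.
    private(set) var mastery: Double = 0.5
    /// How strongly each new answer shifts the estimate (assumed value).
    let learningRate: Double = 0.3

    /// Moves the estimate toward 1 on a correct answer and toward 0 on an
    /// incorrect one (an exponential moving average of correctness).
    mutating func record(correct: Bool) {
        let outcome: Double = correct ? 1.0 : 0.0
        mastery += learningRate * (outcome - mastery)
    }

    /// Picks the next difficulty band from the current estimate.
    /// The cutoffs 0.4 and 0.75 are arbitrary illustration values.
    var nextDifficulty: Difficulty {
        switch mastery {
        case ..<0.4: return .easy
        case ..<0.75: return .medium
        default: return .hard
        }
    }
}

// Usage: two correct answers in a row raise the estimate from 0.5 to 0.755.
var tracker = SkillTracker()
tracker.record(correct: true)
tracker.record(correct: true)
print(tracker.nextDifficulty)  // prints "hard"
```

A production system would likely model many skills at once and use a validated learner model; the moving average is used here only because it is easy to read and reason about.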

Revolutionizing Healthcare with Agent-Developed iOS Apps

In healthcare, AI offers immense potential to improve diagnostics, treatment, and patient care. With AI agents contributing to iOS app development, we could see an acceleration in the creation of powerful, intelligent health applications:

- Personalized Health Management and Monitoring: AI agents could develop iOS apps that integrate with wearables and sensors to provide continuous, personalized health monitoring. These apps could analyze multimodal data (genomics, clinical, phenotypic) to predict health risks, suggest preventative measures, and offer tailored wellness programs. The concept of “AI-augmented healthcare systems” where AI democratizes and standardizes care becomes more tangible.
- Advanced Diagnostic and Predictive Tools: Agents could build mobile applications that assist in early disease detection by analyzing patient data from various sources. Examples include AI in precision imaging (diabetic retinopathy screening) and predictive analytics for conditions like Alzheimer’s.
- Virtual Care Assistants and Chatbots: Agent-developed apps could feature sophisticated virtual assistants and AI chatbots for symptom assessment, medical information, and mental health support. Apps like Babylon and Ada already demonstrate this, but agents could develop more context-aware and empathetic digital companions. Ethical considerations around empathy and accuracy, highlighted by studies on tools like ChatGPT in medical contexts, would be paramount.
- Drug Interaction and Medication Management: AI agents could develop apps that use natural language processing to identify drug-drug interactions, assist with medication adherence, and provide personalized dosing recommendations based on a patient’s unique profile.
- Automated Administrative Support: Beyond clinical uses, agents could create apps that automate administrative tasks within healthcare settings, improving workflow efficiency for medical professionals.
- Remote Patient Monitoring and Telemedicine: Agents could develop iOS apps that facilitate enhanced telemedicine services, allowing for remote monitoring of vital signs and patient status, especially crucial for chronic disease management and for expanding access to care in underserved areas.
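
As a rough illustration of the remote monitoring idea above, the following Swift sketch averages recent heart-rate readings and flags a sustained departure from a configured range. The types `HeartRateSample` and `HeartRateMonitor` are hypothetical and are not HealthKit APIs, and the range in the usage example is a placeholder, not clinical guidance.

```swift
import Foundation

/// One reading from a wearable (hypothetical shape, not a HealthKit type).
struct HeartRateSample {
    let beatsPerMinute: Double
    let timestamp: Date
}

/// Flags sustained out-of-range readings using a rolling average.
struct HeartRateMonitor {
    /// Illustrative bounds only; real thresholds must come from clinicians.
    let acceptableRange: ClosedRange<Double>
    /// Number of most recent samples to average over.
    let windowSize: Int
    private var window: [Double] = []

    init(acceptableRange: ClosedRange<Double>, windowSize: Int) {
        precondition(windowSize > 0, "windowSize must be positive")
        self.acceptableRange = acceptableRange
        self.windowSize = windowSize
    }

    /// Adds a sample and returns true when the rolling average is outside
    /// the acceptable range. Returns false until the window is full, so a
    /// single early spike does not raise an alert.
    mutating func ingest(_ sample: HeartRateSample) -> Bool {
        window.append(sample.beatsPerMinute)
        if window.count > windowSize { window.removeFirst() }
        guard window.count == windowSize else { return false }
        let average = window.reduce(0, +) / Double(window.count)
        return !acceptableRange.contains(average)
    }
}

// Usage: placeholder range and readings, for demonstration only.
var monitor = HeartRateMonitor(acceptableRange: 50...110, windowSize: 3)
for bpm in [82.0, 125.0, 131.0, 140.0] {
    let sample = HeartRateSample(beatsPerMinute: bpm, timestamp: Date())
    if monitor.ingest(sample) {
        print("Rolling average out of range after reading \(bpm)")
    }
}
```

Averaging over a window trades responsiveness for fewer false alarms. Where that balance should sit is a clinical decision rather than an engineering one, which is one reason human oversight remains part of the picture.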

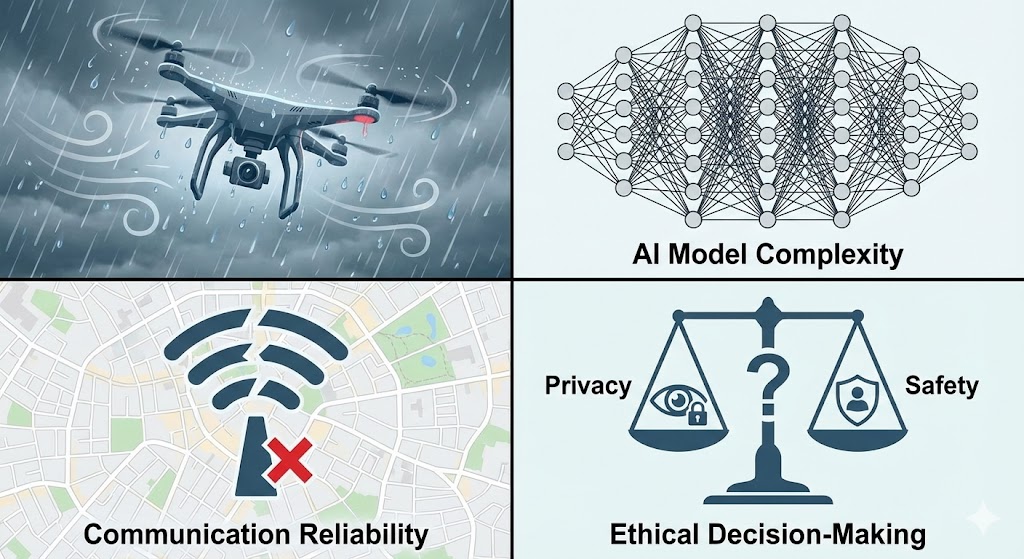

Challenges and the Indispensable Human Element

While the vision of AI agents developing iOS apps for education and healthcare is compelling, it is not without significant challenges:

- Ethical Considerations: The development of AI agents for such sensitive fields necessitates rigorous ethical frameworks. Bias in algorithms, data privacy (especially with HIPAA and GDPR compliance), and the need for human oversight to ensure fairness, accountability, and empathy are critical. The potential for AI to provide harmful advice, as seen in some chatbot therapy instances, underscores this.
- Data Quality and Access: AI’s effectiveness relies heavily on high-quality, diverse datasets. In education and healthcare, obtaining and utilizing such data responsibly presents complex logistical and ethical hurdles.
- Technical Infrastructure and Integration: The seamless integration of AI agents into existing development pipelines and healthcare/education systems requires robust technical infrastructure and interoperability standards.
- Regulatory Landscape: The rapidly evolving nature of AI often outpaces regulatory frameworks. Clear guidelines are needed for AI-powered medical devices and educational tools.
- The Human-AI Partnership: Critically, AI agents are envisioned to augment, not replace, human intelligence. Skilled human engineers, educators, and healthcare professionals will remain indispensable for defining requirements, overseeing agent outputs, ensuring clinical validity, and providing the nuanced human judgment and empathy that AI currently lacks. The role shifts from direct coding to guiding, validating, and iterating with AI collaborators.
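
The oversight role described above can be made concrete as a gate that no agent output passes without an explicit human decision. The sketch below assumes hypothetical types (`ReviewState`, `AgentChange`) and a deliberately simple rule: a change is releasable only after a named reviewer approves it, and decisions are final.

```swift
import Foundation

/// Lifecycle of an agent-proposed change (hypothetical states).
enum ReviewState: Equatable {
    case proposed
    case approved(reviewer: String)
    case rejected(reviewer: String, reason: String)
}

/// A unit of agent output awaiting human sign-off.
struct AgentChange {
    let summary: String
    private(set) var state: ReviewState = .proposed

    init(summary: String) { self.summary = summary }

    /// Approves a change that is still pending. Returns false, and leaves
    /// the state untouched, if a decision was already made.
    @discardableResult
    mutating func approve(by reviewer: String) -> Bool {
        guard state == .proposed else { return false }
        state = .approved(reviewer: reviewer)
        return true
    }

    /// Rejects a pending change and records why.
    @discardableResult
    mutating func reject(by reviewer: String, reason: String) -> Bool {
        guard state == .proposed else { return false }
        state = .rejected(reviewer: reviewer, reason: reason)
        return true
    }

    /// Release is gated on explicit human approval.
    var isReleasable: Bool {
        if case .approved = state { return true }
        return false
    }
}

// Usage: nothing ships until a reviewer signs off.
var change = AgentChange(summary: "Add dosage reminder screen")
print(change.isReleasable)  // prints "false"
change.approve(by: "clinical-reviewer")
print(change.isReleasable)  // prints "true"
```

Recording the reviewer in the state itself keeps an audit trail attached to each change, which matters in regulated settings such as those governed by HIPAA or GDPR.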

Conclusion: A Future Forged by Collaboration

The era of AI agents in iOS development for education and healthcare is rapidly approaching. While technical and ethical challenges abound, the potential for these intelligent systems to democratize access to personalized learning and revolutionize patient care is immense. The future will not be about AI agents working in isolation, but rather a powerful collaboration between human ingenuity and artificial intelligence, forging a new generation of iOS applications that truly enhance human potential in these vital sectors. The journey requires careful navigation, but the destination promises a more responsive, equitable, and intelligent world.